Machine learning accelerates cosmological simulations

A universe evolves over billions of years, but researchers have now developed a way to create a complex simulated universe in less than a day. The technique, published in Proceedings of the National Academy of Sciences, brings together machine learning, high-performance computing and astrophysics -- and will help usher in a new era of high-resolution cosmological simulations. The U.S. National Science Foundation funded the research.

Cosmological simulations are essential for teasing out the many mysteries of the universe, including those of dark matter and dark energy. But until now, researchers faced a trade-off: simulations could either focus on a small area at high resolution or encompass a large volume of the universe at low resolution.

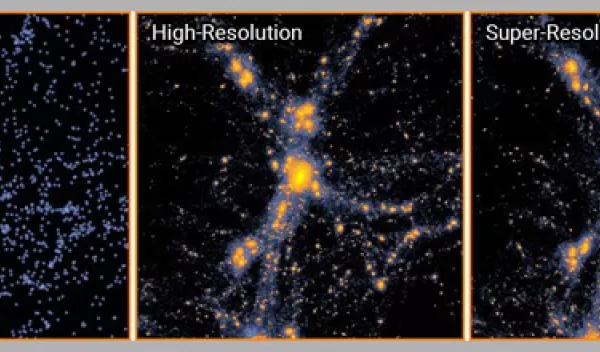

Carnegie Mellon University physicist Tiziana Di Matteo and colleagues surmounted this problem by teaching a machine learning algorithm based on neural networks to upgrade a simulation from low resolution to super resolution.

"It's clear that AI is having a big effect on many areas of science, including physics and astronomy," said James Shank, a program director in the U.S. National Science Foundation's Division of Physics. "Our AI Planning Institute program is working to push AI to accelerate discovery. This new result is a good example of how AI is transforming cosmology."

The trained code can take full-scale, low-resolution models and generate super-resolution simulations that contain up to 512 times as many particles. For a region of the universe roughly 500 million light-years across that contains 134 million particles, existing methods would require 560 hours (about 23 days) on a single processing core to produce a high-resolution simulation. With the new approach, the researchers need only 36 minutes.
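To put those figures in perspective, the arithmetic can be checked in a few lines. The sketch below uses only the numbers quoted in the article (560 hours, 36 minutes, 512 times as many particles). The variable names are illustrative, and the per-dimension factor rests on an assumption the article does not state: that the particle increase is spread evenly across three spatial dimensions. The article also does not say what hardware produced the 36-minute result, so the speedup is a rough indication rather than a like-for-like benchmark.

```python
# Figures quoted in the article.
conventional_hours = 560   # existing methods, single processing core
ml_minutes = 36            # new machine learning approach
particle_factor = 512      # up to 512x as many particles

# Convert both run times to minutes before comparing them.
conventional_minutes = conventional_hours * 60
speedup = conventional_minutes / ml_minutes

# Assumption: the factor is split evenly over three spatial dimensions,
# so the per-dimension increase is the cube root of 512.
per_dimension = round(particle_factor ** (1 / 3))

print(f"Speedup: about {speedup:.0f}x")
print(f"Increase per dimension: {per_dimension}x")
```

Under those assumptions, the run time drops by a factor of roughly 930, and the 512-fold particle increase corresponds to 8 times as many particles along each axis.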

The results were even more dramatic when more particles were added to the simulation. For a universe 1,000 times as large, with 134 billion particles, the new method took 16 hours on a single graphics processing unit. Using current methods, a simulation of this size and resolution would take a supercomputer months to complete.
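A quick check of how the run time scaled between the two simulations quoted in the article is sketched below. It uses only the stated particle counts and run times, and the variable names are illustrative. Because the article names the hardware for the 16-hour run (a single graphics processing unit) but not for the 36-minute run, the ratio should be read as indicative only.

```python
# Figures quoted in the article.
small_particles = 134_000_000       # 134 million particles
large_particles = 134_000_000_000   # 134 billion particles
small_minutes = 36                  # smaller run, new method
large_minutes = 16 * 60             # larger run, new method, single GPU

# How much larger the second simulation is, and how much longer it took.
size_ratio = large_particles // small_particles
time_ratio = large_minutes / small_minutes

print(f"Particle count grew {size_ratio}x")
print(f"Run time grew about {time_ratio:.1f}x")
```

On these numbers, a thousandfold increase in particles came with a run time only about 27 times longer.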